AI/ML Technical Content Strategist

Why Platform Security Feels Different For AI Workloads

Every AI platform says some version of the same thing: we take security seriously. That is easy to say. It is a lot more important once your app is handling internal docs, source code, or customer data. What we wanted to know was simpler: before we sent sensitive context through one of these platforms, what could we actually verify for ourselves? So we built a simple private-document chatbot and ran the same basic workflow across multiple inference platforms. The test was straightforward: upload a document, index it locally, ask questions against it, then delete it. We paired that hands-on work with documentation review, focusing on the security areas that seemed most likely to matter in practice: access controls, retention, isolation, logging, disclosure, and shared responsibility.

The most useful result was not a checklist winner. It was figuring out which platform made its security story easiest to check with our own eyes.

Our Methodology: Research Plus Hands-On Verification

We tested six platforms (DigitalOcean, Baseten, Nebius, Fireworks AI, Modal, and Together AI) and evaluated each one in two ways. First, we reviewed public documentation for key security topics: identity and access, retention and deletion, isolation, auditability, disclosure posture, and shared responsibility. Then we tested those claims by wiring up the same private-document workflow across the platforms we could access directly.

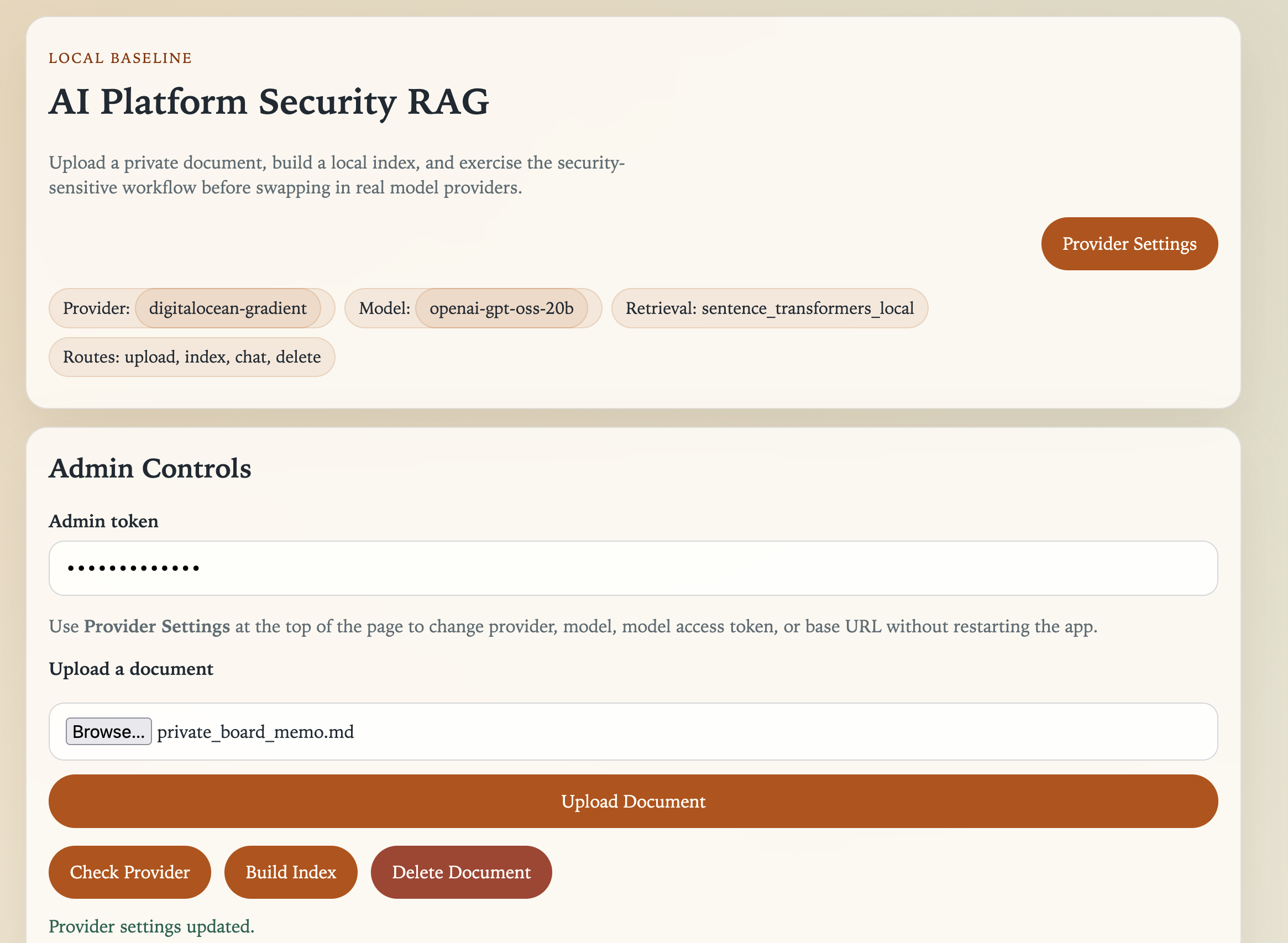

Figure 1: The local RAG test harness used across all platforms. Retrieval remained local; only selected chunks were sent to inference providers.

I kept the app simple on purpose. It did four things: upload a document, index it locally, answer questions, and delete the document afterward. The document was short, and only contained the information we asked about. We also planted a canary phrase to check whether responses were really grounded in the uploaded file, and we used a deletion marker to see whether that file still influenced answers after we removed it.
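The canary and deletion-marker checks can be sketched roughly as follows. This is a minimal illustration, not the harness we actually ran: the phrase values and the function names (`contains_phrase`, `grounding_ok`, `deletion_ok`) are hypothetical, and the matching is a plain normalized substring test.

```python
# Hypothetical values: any unlikely-to-be-guessed strings planted in the test document.
CANARY = "violet-heron-4471"
DELETION_MARKER = "marker-delta-9"


def contains_phrase(answer: str, phrase: str) -> bool:
    """Case-insensitive substring check that ignores differences in whitespace."""
    def normalize(text: str) -> str:
        return " ".join(text.lower().split())
    return normalize(phrase) in normalize(answer)


def grounding_ok(answer: str) -> bool:
    """Before deletion: the answer should surface the planted canary phrase."""
    return contains_phrase(answer, CANARY)


def deletion_ok(answer_after_delete: str) -> bool:
    """After deletion: the marker should no longer appear in any answer."""
    return not contains_phrase(answer_after_delete, DELETION_MARKER)
```

A substring test like this only catches verbatim leakage. A model that paraphrases deleted content would pass it, so it is a floor for the deletion check rather than proof.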

Just as importantly, retrieval stayed local throughout the comparison. That meant the inference provider only received the user’s question plus the top retrieved chunks, not the full source document by default. This kept the test focused on the inference platform rather than on differences in hosted vector databases or retrieval stacks.
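To make "only the top retrieved chunks leave the machine" concrete, here is a small sketch of that boundary. It assumes a simple word-overlap score as a stand-in for the embedding similarity a real RAG app would use, and the helper names (`tokens`, `top_chunks`, `build_messages`) are illustrative rather than taken from our test app.

```python
import re
from typing import Dict, List


def tokens(text: str) -> set:
    """Lowercased alphanumeric words; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(question: str, chunks: List[str], k: int = 2) -> List[str]:
    """Rank chunks locally by word overlap with the question and keep the best k."""
    q = tokens(question)
    ranked = sorted(chunks, key=lambda c: len(q & tokens(c)), reverse=True)
    return ranked[:k]


def build_messages(question: str, chunks: List[str], k: int = 2) -> List[Dict[str, str]]:
    """Build the only payload that would be sent to the inference provider."""
    context = "\n---\n".join(top_chunks(question, chunks, k))
    return [
        {"role": "system", "content": "Answer only from the context below.\n\n" + context},
        {"role": "user", "content": question},
    ]
```

Everything except the return value of `build_messages` stays on the local machine, which is the property the comparison depends on.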

For each run, we captured configuration behavior, model visibility, request metadata, response success, latency, and delete-path outcomes. Throughout the article, we distinguish between what we could verify directly in those runs and what we only found in public documentation.
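A per-run record along these lines is enough to capture the fields listed above. This is a sketch under assumptions: `call` is a hypothetical zero-argument function that performs one request and returns `(status, headers, body)`, and the `x-request-id` header name is an assumption, since providers name their request ID headers differently.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class RunRecord:
    platform: str
    model: str
    ok: bool
    status: Optional[int]
    latency_s: float
    request_id: Optional[str]


def record_run(
    platform: str,
    model: str,
    call: Callable[[], Tuple[int, Dict[str, str], str]],
) -> RunRecord:
    """Time one provider call and keep the metadata needed to compare runs."""
    start = time.perf_counter()
    try:
        status, headers, _body = call()
    except Exception:
        # A transport or auth failure is still a result worth recording.
        status, headers = None, {}
    latency = time.perf_counter() - start
    lowered = {name.lower(): value for name, value in headers.items()}
    ok = status is not None and 200 <= status < 300
    # Assumed header name; adjust per provider.
    return RunRecord(platform, model, ok, status, latency, lowered.get("x-request-id"))
```

The response body is deliberately not stored in the record, so the test log does not become another copy of the sensitive context.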

The Security Touchpoints That Actually Mattered

Six security questions ended up mattering more than anything else:

- What kind of credential do you actually get, and how narrowly can you scope it?

- After a request finishes, what actually gets stored—prompts, outputs, logs, or nothing at all?

- Can you isolate workloads (VPCs, dedicated deployments, BYOC) without talking to sales?

- What logs do you get, and are they actually accessible as a self-serve user?

- Does the platform invite outside scrutiny through a bug bounty or disclosure program?

- Is the shared responsibility model clear enough to act on, or do you have to infer it?

These questions turned out to be more useful than any single compliance checklist, because they map directly to what you can verify before sending real data through a system.

AI Inference Platform Security Overview

This section covers what we learned about each of the six platforms (DigitalOcean, Baseten, Nebius, Fireworks AI, Modal, and Together AI) through documentation research and initial tests.

DigitalOcean

DigitalOcean is not just an inference platform; it is a broader cloud platform with AI inference layered onto mature infrastructure. That hybrid position, both cloud-native and inference-native, gives DigitalOcean some advantages the inference-native vendors don’t really have yet: self-service VPCs, a mature public bug bounty program, product-level shared responsibility docs, and a more complete account security model. In practice, that made it easier to answer basic questions early, before we had done much more than create credentials and run a few test calls.

The VPC situation is the clearest example. Every other platform in this review that offers VPC or BYOC support gates it behind a sales process. On DigitalOcean, private networking is a standard dashboard feature that you provision the same way you’d spin up a Droplet, with no contract negotiation required. For a team that wants network isolation early rather than after the first security review, that’s a meaningful practical difference.

The bug bounty program has been running on Intigriti since 2020, paid out over $63,000 to researchers in the past year, and scales up to $8,000 for critical findings. That’s direct evidence that DigitalOcean has spent years inviting outside scrutiny and paying for it. The Imperva research that surfaced DigitalOcean’s activity log enrichment issue came through exactly that mechanism, which is worth acknowledging: the gap was found and disclosed publicly because the program exists. No other platform in this comparison has a comparable track record.

The shared responsibility documentation is also the most thorough in the group. Rather than one generic overview page, each major service - Droplets, Spaces, Volumes, Managed Databases, App Platform, Networking - has its own dedicated page explaining exactly what DigitalOcean secures and what the customer still owns. That specificity matters when you’re trying to make an actual deployment decision rather than satisfy a checkbox.

The caveats are real and worth naming directly. DigitalOcean doesn’t hold a company-level ISO 27001 certification. Each of its underlying data center facilities does, but the company entity itself doesn’t, which may be a procurement blocker for enterprise buyers with strict compliance requirements. The Gradient AI data retention story is also stronger for open-source models than for third-party ones: for models like Llama, Mistral, and DeepSeek, inference data doesn’t touch DigitalOcean’s storage. But OpenAI models accessed through Gradient don’t support zero data retention, which means those requests are subject to OpenAI’s own retention policies rather than DigitalOcean’s. If your workload needs strict ZDR and you’re routing through a third-party model, you need to know that before you start. Finally, there is the activity log enrichment gap mentioned above: logs currently record a user’s full name rather than a unique identifier, which makes spoofing easier and forensic attribution harder than it should be.

Our experience using DigitalOcean was smooth: the documented authentication flow worked as described, and SSO let us set up a profile quickly. Once we added a payment method, we could navigate to the Agent Platform from the sidebar, click into Serverless Inference, create our model access key, and get started immediately. It’s also worth noting that, in our past experience on the platform, we did not have to guess whether private networking was a paper feature or a real one we could turn on ourselves.

Baseten

The most important thing about Baseten is also the simplest: by default, it does not store your inference data. On several other platforms, you have to dig through settings or product docs to figure out whether retention is on by default. With Baseten, the privacy-protective behavior is the starting point, which is exactly where you want it if you are sending sensitive RAG context through the API.

The API key design reinforces that posture. Baseten’s team keys can be scoped to inference-only, metrics-only, or full access, and can be further restricted by environment and by model. That’s a meaningful least-privilege implementation that most platforms don’t match — you can issue a key that is literally incapable of doing anything other than running inference against one specific model in one specific environment. In practice, that means a compromised inference key can’t be used to exfiltrate training data, modify deployments, or access billing. Nebius’s AI Cloud has a comparably sophisticated auth design using short-lived IAM tokens, but for a pure inference use case, Baseten’s scoping model is the most practical implementation of least-privilege in the group.

The hosting architecture gives customers a clear escalation path as their security requirements grow. The progression from shared cloud to single-tenant cluster to fully self-hosted VPC isn’t just a sales tier structure — each step meaningfully changes the isolation model. Single-tenant clusters use dedicated Kubernetes namespaces with Calico network policies and Falco runtime security. The self-hosted option puts the entire stack inside the customer’s own infrastructure. That progression means a team can start on shared infrastructure and migrate toward stricter controls without switching platforms, which is harder to do when the VPC option lives on a different vendor entirely.

The gaps are worth being direct about. Baseten has no public bug bounty program and no formal vulnerability disclosure policy — if a security researcher finds something, there’s no structured channel to report it beyond a generic contact address. That’s not evidence of weak internal security, but it does mean the platform hasn’t invited the same level of external scrutiny as DigitalOcean or Modal. There’s also no ISO 27001 certification in public materials, which matters in some enterprise procurement contexts. And while Baseten’s SOC 2 Type II report confirms MFA as an implemented control for internal access, customer-facing MFA enforcement isn’t clearly documented publicly — so whether you can require 2FA across your team is a question worth asking directly before committing to the platform for a regulated workload.

Nebius

Nebius is the platform you point to when someone asks which provider has done the most formal compliance work. Its certification portfolio is the broadest in this comparison by a significant margin — SOC 2 Type II audited by Deloitte, company-level ISO 27001 (plus 27701, 27799, 22301, 27018, and 27032), HIPAA, GDPR, CCPA, NIS2, and DORA. For teams in regulated industries where procurement requires checking specific boxes, Nebius checks more of them than anyone else in this review, and the Deloitte auditor relationship gives those certifications more weight than a less recognizable firm would.

The governance infrastructure behind those certifications is also more explicitly documented than most competitors. Nebius publishes a formal shared responsibility matrix on its Trust Center that breaks down security ownership layer by layer — not a single generic overview page, but a structured mapping of what the platform owns versus what the customer still has to configure. That specificity is useful when you’re actually trying to make a deployment decision rather than just satisfy a checkbox. It puts Nebius alongside DigitalOcean as the two platforms where the shared responsibility model is concrete enough to act on rather than interpret.

Two other features stand out as genuinely differentiated. The MysteryBox native secrets vault means customers don’t have to wire up a separate secrets management service — credentials, API keys, and sensitive configuration live in an encrypted vault that’s part of the platform rather than bolted on. And Nebius explicitly claims immutable, timestamped audit logs, which is a stronger public commitment than most of the other platforms make. Immutability matters for compliance and incident investigation: logs that can be modified after the fact are worth less than logs that can’t. Nebius is the only platform in this comparison that makes that claim explicitly in public documentation.

The caveat that matters most for AI workloads is about data retention defaults in Token Factory, which is the managed inference product most developers will actually use. Token Factory stores prompts and outputs by default to support speculative decoding — a performance optimization that requires retaining recent context. Zero data retention is available, but it has to be explicitly enabled. That’s the opposite posture from Baseten and Fireworks, where no storage is the default. It’s not a disqualifying gap, but it’s the kind of thing that bites teams who assume privacy-protective defaults and don’t check. If ZDR matters for your workload on Nebius, turning it on is the first thing you should do after provisioning, not something to revisit later.

Fireworks AI

Fireworks has the strongest default data retention posture of any platform in this review for standard inference. Zero data retention is on by default — you don’t have to find a setting, enable a feature, or negotiate a contract addendum to avoid having your prompts stored. For teams that are sending sensitive context through an inference API and want the simplest possible privacy guarantee, that default is genuinely valuable and meaningfully better than most of the competition.

The isolation options are also credible. Dedicated deployments give customers their own GPU capacity rather than shared infrastructure, and BYOC on AWS puts the inference stack inside the customer’s own cloud environment entirely. Those paths exist for teams that need stronger boundaries than a shared serverless endpoint provides, though both require engaging with sales rather than provisioning through a dashboard.

There’s one retention default that’s easy to miss and worth flagging explicitly. The standard inference API is zero-retention by default, but the Response API — which handles stateful, multi-turn conversation — retains data for 30 days unless you explicitly set store=false. That’s a meaningful distinction for anyone building a conversational application rather than a single-turn query flow. The two products behave differently by default, and nothing in the standard onboarding flow makes that obvious.

Audit logs are the other significant gap, and it’s a structural one: they’re enterprise-only. A self-serve team on Fireworks doesn’t get access to audit logging at all, which limits how much you can actually inspect about what’s happening with your requests. For smaller teams that still need some visibility into access and usage — which is most teams — that’s a real limitation that the compliance certification story doesn’t compensate for. The public vulnerability disclosure posture is also thin: there’s no public bug bounty, no formal VDP page, and no visible security contact address, which means outside researchers have no structured channel to report findings.

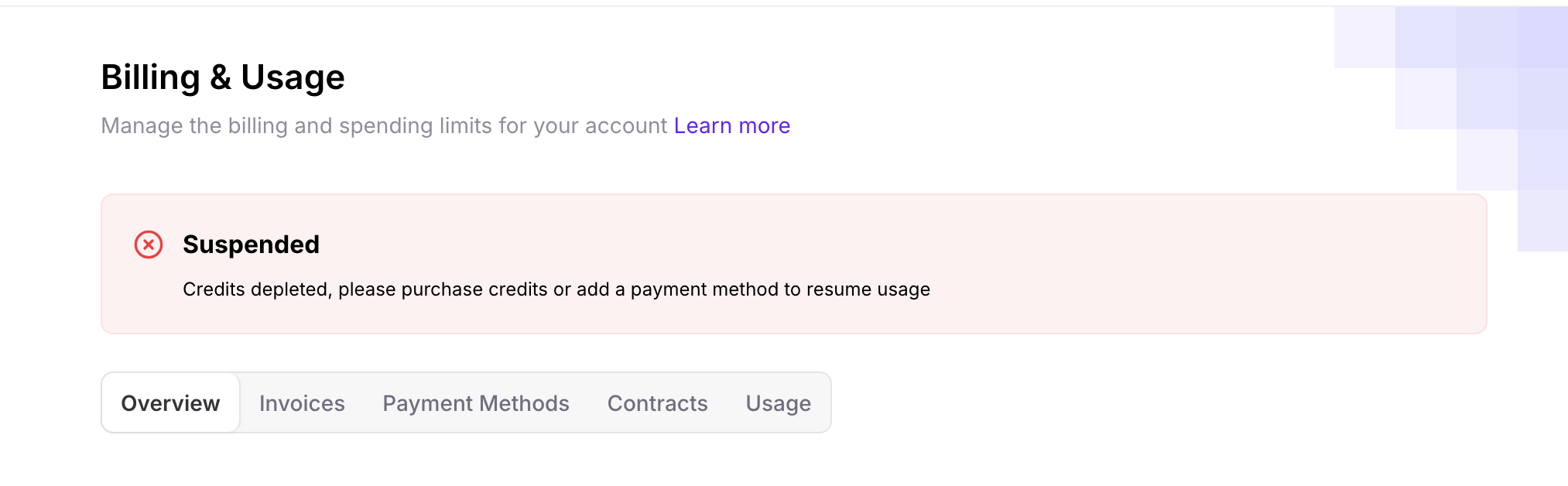

Figure 2: Billing inconsistency encountered during testing, illustrating gaps between documented and actual platform behavior.

The signup and billing flow also made us trust the platform less than the docs did. Fireworks offered a survey-for-credits promotion during onboarding, but when we followed the steps and added a credit card, the promised credits disappeared. We had to buy credits separately to keep testing, then hit another bug that left us with an odd balance and no reliable way to set the spending cap where we wanted it. When we went to shut the test instance down, the UI froze. None of those are security vulnerabilities by themselves. But they did change how much confidence we had in the platform’s operational polish, and that matters when the broader argument is about whether documented behavior matches real behavior.

Modal

Modal’s security story is the most technically distinctive in this comparison, but it’s also the most misunderstood if you approach it expecting a conventional cloud security model. Modal doesn’t offer a customer VPC. There’s no BYOC path. You can’t put Modal workloads inside your own private cloud infrastructure. If those are hard requirements, Modal isn’t the right platform. But if your threat model centers on workload isolation at the execution layer rather than network perimeter control, Modal has built something more interesting than most of the competition.

The foundation is gVisor, Google’s user-space kernel sandbox, which Modal applies to all compute by default. Rather than sharing a kernel with other tenants the way standard container runtimes do, gVisor interposes a separate kernel implementation between the container and the host, which means a compromised workload can’t reach the host kernel directly. That’s a meaningfully stronger isolation guarantee than namespace separation alone, and it applies to every Modal workload without any customer configuration required. For teams running arbitrary code or third-party model artifacts — the scenarios where shared-kernel isolation is most concerning — that default matters.

The sandbox network controls add another layer. Modal lets you explicitly block network access for individual functions, or allowlist specific CIDR ranges, which means you can run inference workloads with no outbound internet access unless you’ve deliberately permitted it. That’s a fine-grained control that most platforms don’t expose at the function level, and it’s useful for containing what a compromised or misbehaving workload can reach.
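To illustrate what a CIDR allowlist means in practice, here is a small standard-library sketch of the decision it encodes. This is not Modal’s API or implementation; `is_destination_allowed` is a hypothetical helper that only shows the semantics: a destination is reachable only if it falls inside a permitted range, and an empty allowlist permits nothing.

```python
import ipaddress
from typing import Iterable


def is_destination_allowed(ip: str, cidr_allowlist: Iterable[str]) -> bool:
    """Return True only if the destination IP sits inside an allowlisted range."""
    address = ipaddress.ip_address(ip)
    return any(
        # strict=False tolerates ranges written with host bits set, e.g. 10.0.0.1/8.
        address in ipaddress.ip_network(cidr, strict=False)
        for cidr in cidr_allowlist
    )


# Example: permit only an internal range; everything else is denied.
ALLOWLIST = ["10.0.0.0/8"]
print(is_destination_allowed("10.1.2.3", ALLOWLIST))
print(is_destination_allowed("8.8.8.8", ALLOWLIST))
```

The default-deny shape is the useful part: a compromised workload can only reach destinations someone deliberately listed.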

Modal is also one of only two platforms in this review with a public bug bounty program, and the only one that publishes explicit remediation SLAs: critical findings within 24 hours, high within a week, medium within a month, low within three months. Publishing those commitments is a meaningful signal — it means Modal has made a public promise about how it responds to security reports, not just an internal one. Combined with Datadog and OpenTelemetry integrations and audit log export, the observability story is also more operationally complete than several platforms with stronger compliance certifications.

The compliance portfolio is the honest gap. Modal holds SOC 2 Type II and HIPAA, but with carve-outs worth reading carefully — Volumes v1, memory snapshots, and user code itself are explicitly outside the HIPAA BAA scope. ISO 27001 is absent. GDPR and CCPA aren’t prominently documented. For teams in regulated industries running procurement checklists, Modal will come up short against Nebius and potentially DigitalOcean. The no-BYOC, no-customer-VPC constraint is also a genuine architectural limit rather than a roadmap gap — it’s a deliberate design choice, which means it’s unlikely to change. Teams that need their inference infrastructure inside their own network perimeter should look elsewhere.

Teams that need strong execution-layer isolation and are comfortable with Modal’s infrastructure model will find it hard to match elsewhere in this set.

Together AI

Together AI is the broadest platform in this comparison — inference API, GPU cloud, fine-tuning, evaluations — and that breadth shows in the security documentation in places. The encryption specifications are among the most explicit in the group: AES-256 at rest, TLS 1.3 for external connections, TLS 1.2 for internal traffic. Most platforms confirm encryption exists without specifying the implementation; Together actually names the versions and algorithms, which makes it easier to evaluate rather than trust on faith. The VPC deployment documentation is also unusually public for an enterprise feature — architecture diagrams, a published responsibility matrix comparing cloud versus VPC deployment, and explicit data sovereignty guarantees are all available without a sales conversation. That transparency is worth noting because most platforms at this tier bury VPC details behind a contact form.

The DLP mention is unique in this comparison. Together is the only platform that explicitly documents a data loss prevention solution as part of its security stack. That’s relevant for teams building applications that might inadvertently route sensitive data — PII, credentials, confidential records — through an inference pipeline. Whether the implementation is as robust as the mention suggests is harder to verify from public documentation alone, but the fact that it’s documented at all puts Together ahead of every other platform in the group on this specific dimension.

The default data retention behavior is the most important thing to understand before sending anything sensitive. Unlike Baseten and Fireworks, Together stores prompts and responses by default. Zero data retention is available, but it requires going into Privacy and Security settings and explicitly opting out — and it only applies from the moment you enable it, with no retroactive effect on previously processed data. That’s a meaningful gap between documented capability and safe default. The SOC 2 Type II certification is also the most recently achieved in the group, announced in July 2025, which means the compliance program is real but has less of a track record than platforms that have been through multiple audit cycles. The public vulnerability disclosure posture is thin: no bug bounty, no formal VDP, no visible security contact address beyond a general privacy email.

The onboarding experience added its own layer of friction. Together’s signup flow presents what appears to be a required five dollar payment to access the platform, with no obvious indication that free credits are available or that the payment is optional. After completing the payment — which took several minutes to process — five additional dollars in credits appeared automatically. The intended logic seems to be that paying triggers a credit match, but the UX presents it as a straightforward purchase with no mention of the match until after the transaction clears. It’s a minor billing confusion rather than a security issue, but it’s worth flagging for the same reason the Fireworks billing bugs were: a platform where basic transactional flows are this opaque is one where you should read the retention defaults and data handling terms carefully rather than assuming the documented behavior matches the actual experience.

The Security Touchpoints

Secrets And Identity

For each platform, we looked at four things: how easy it was to obtain the right key, whether that key could be narrowly scoped, whether it could be validated before sending sensitive prompts, and how mature the surrounding identity controls felt, including SSO, RBAC, and MFA.

| Platform | How easy was it to get the right credential in the first place? | Were keys scoped or monolithic? | Could we validate the key before sending sensitive prompts? | Did the platform support SSO, RBAC, and MFA in a way that felt real or enterprise-gated? |
|---|---|---|---|---|
| Baseten | Moderate. Docs were clear that there are personal keys for local work and team keys for production, but you do have to choose the right type up front. | Strongly scoped. Team keys can be full, inference-only, or metrics-only, and can also be scoped by environment and model. | Yes in the demo. The app could check /v1/models before chat, so we could confirm the key and model before sending private context. | Real, but enterprise-leaning. SSO and RBAC are documented; MFA is not clearly documented publicly, and SSO appears tied to enterprise plans. |
| Modal | Moderate. Workspace token creation looks straightforward, but the model is more “long-lived workspace tokens plus service users” than narrowly scoped inference credentials. | Partly scoped, but not as tight as Baseten. Tokens are workspace-scoped; service users and restricted environments add control, but the tokens are still long-lived. | Unclear from the documentation. The docs clearly describe token management, but not a simple preflight “validate this inference credential before first prompt” flow like the provider-check in our demo app. | Real, but some controls are enterprise-gated. RBAC is enterprise-tier, Okta SAML is documented, and customer MFA is mostly inherited from the IdP. Internal MFA posture is strong. |
| Fireworks AI | Moderate. Human access looks easy because Google SSO is the default, but programmatic access is more role-and-service-account driven. | Better than monolithic, but less explicitly granular than Baseten. Service accounts and roles exist, including inference-user, which is useful. | Not independently verified in the docs. It likely supports a preflight check on OpenAI-compatible endpoints, but we would not call that proven yet. | Real, but mixed. SSO is a genuine first-class pattern, RBAC is documented, but custom SSO is enterprise add-on territory and MFA depends on the IdP rather than a native platform control. |
| Together AI | Moderate. OAuth login is easy, and project-level key structure is a plus, but the org/project setup adds a little overhead. | Strongly scoped. Project-level API keys are the default unit, which is better than one account-wide master key. | Not independently verified in the documentation. The structure suggests it should be possible, but we have not proven that workflow hands-on yet. | Real, but enterprise-assisted. SSO, RBAC, and MFA are documented, but SSO is for Scale/Enterprise and is not yet self-serve. |
| Nebius | Mixed. Token Factory looks relatively easy because it uses static API keys; AI Cloud is more complex because it uses short-lived IAM token exchange, but it is also the strongest auth design. | AI Cloud is best-in-class on paper: short-lived bearer tokens, service accounts, and IAM roles. Token Factory is simpler and more static. | Partly. AI Cloud credentials can be validated through the token-exchange flow itself, but the documentation does not have an easy-to-find, pre-prompt model-visibility check for Token Factory. | Real and mature overall. SSO and RBAC are clearly documented, especially with Entra ID on AI Cloud, but customer-enforced MFA is less clearly documented than the rest. |
| DigitalOcean | Moderate. The main friction is knowing that Gradient inference uses a model access key, not just a regular DigitalOcean cloud token. Once you know that, it is straightforward. | Improved and reasonably scoped. DigitalOcean now has custom token scopes, and in practice the Gradient model access key split is better than one giant all-purpose credential. | Yes in the demo. The app-level provider-check validated the key and confirmed model visibility before chat. | Real and comparatively accessible. Scoped tokens, RBAC, SSO, and team-enforced 2FA are all documented. It feels less enterprise-gated than most of the inference-focused platforms, though there are some RBAC caveats worth noting. |

This turned out to be one of the clearest areas of differentiation. DigitalOcean offers scoped API tokens for the broader platform, along with RBAC, SSO, and team-enforced 2FA, which makes the baseline identity story feel solid. In our hands-on testing, it was also possible to validate the configured key and model before sending prompts, which reduced some of the operational uncertainty. The main limitation, from an inference-security perspective, is that we did not find the same kind of fine-grained model-key scoping that some competitors expose. There is room for DigitalOcean to go further here with more granular model access controls, such as inference-only or metrics-only scopes.
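The preflight idea is simple enough to sketch: list the models the key can see, and refuse to send private context if the call fails or the target model is missing. The version below is an illustration, not our app’s actual provider-check. It assumes an OpenAI-compatible `/v1/models` endpoint that accepts a bearer token and returns `{"data": [{"id": ...}]}`; the function names and the injectable `fetch` parameter are hypothetical.

```python
import json
import urllib.request
from typing import Callable, Dict, Tuple


def http_get_json(url: str, headers: Dict[str, str]) -> dict:
    """Default fetcher: a plain standard-library GET that parses a JSON body."""
    request = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def preflight(
    base_url: str,
    api_key: str,
    model: str,
    fetch: Callable[[str, Dict[str, str]], dict] = http_get_json,
) -> Tuple[bool, str]:
    """Check key validity and model visibility before any prompt is sent."""
    url = base_url.rstrip("/") + "/v1/models"  # assumed OpenAI-compatible path
    try:
        body = fetch(url, {"Authorization": f"Bearer {api_key}"})
    except Exception as exc:
        return False, f"key or endpoint rejected: {exc}"
    visible = {entry.get("id") for entry in body.get("data", [])}
    if model not in visible:
        return False, f"model {model!r} not visible to this key"
    return True, "ok"
```

Because the check sends no prompt and no document content, a failed preflight costs nothing in exposure. Passing a fake `fetch` also makes the logic testable without a live key.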

Baseten stood out for the strongest key-scoping model in the group. Its team keys can be limited to inference-only or metrics-only access, and can also be scoped by environment and by model. That is a meaningful least-privilege advantage for production teams. Nebius took a different but equally compelling approach on AI Cloud, where service accounts and short-lived IAM tokens create a more mature authorization model than static API keys, even if Token Factory itself uses a simpler API-key pattern for inference.

The rest of the field landed somewhere in between. Fireworks documents strong role separation and service accounts, but MFA appears to depend largely on the identity provider rather than a platform-native control. Together supports project-scoped API keys and enterprise SSO, though some of that setup still appears to be team-assisted rather than fully self-serve. Modal also has a thoughtful identity model, with service users and restricted environments that help separate production from development, but because Modal is a broader serverless compute platform rather than a pure inference API, its secrets and identity model sits in a somewhat different category.

Data Flow, Retention, And Deletion

This app keeps the full uploaded document, chunking, embeddings, and retrieval pipeline local, then sends only the user’s question plus the top retrieved document chunks to the inference provider for answer generation. In other words, it does not upload the whole source document by default; it only transmits the small slice of context selected at query time. That limits how much the inference provider could store after the fact, but what it actually stores varies from provider to provider.

Here, the clearest differentiator is whether zero data retention is the default, optional, or unavailable. Baseten has the cleanest public no-storage posture by default. Fireworks is also strong by default for standard inference, though its Response API retains conversation data for 30 days unless store=false is explicitly set. Nebius is more nuanced: Token Factory stores prompts and outputs by default to support speculative decoding unless Zero Data Retention is turned on. Together follows a similar opt-out model, storing prompts and responses by default unless users disable that behavior in settings. DigitalOcean’s Gradient story is strong for open-source models, but requests routed to OpenAI models through Gradient do not support zero data retention, which is an important caveat for privacy-sensitive workloads. Modal lands somewhere in the middle: it deletes function inputs and outputs after retrieval, but with a maximum seven-day TTL, it is not truly zero-retention.
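The defaults described above can be written down as data, which makes it easy to see which products need action before sensitive prompts are sent. The entries below restate only what this article reports from public documentation; the `zdr` labels ("default", "opt-in", "unavailable") and the names `RetentionDefault` and `needs_attention` are our own shorthand, not vendor terminology.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RetentionDefault:
    platform: str
    product: str
    zdr: str   # "default", "opt-in", or "unavailable" (our shorthand)
    note: str


RETENTION = [
    RetentionDefault("Baseten", "inference", "default", "no storage by default"),
    RetentionDefault("Fireworks AI", "standard inference", "default", "zero retention by default"),
    RetentionDefault("Fireworks AI", "Response API", "opt-in", "retained 30 days unless store=false"),
    RetentionDefault("Nebius", "Token Factory", "opt-in", "stored by default; ZDR must be enabled"),
    RetentionDefault("Together AI", "inference", "opt-in", "stored by default; opt out in settings"),
    RetentionDefault("DigitalOcean", "Gradient (open-source models)", "default", "no DigitalOcean storage"),
    RetentionDefault("DigitalOcean", "Gradient (OpenAI models)", "unavailable", "OpenAI retention applies"),
    RetentionDefault("Modal", "function inputs and outputs", "unavailable", "deleted after retrieval; max 7-day TTL"),
]


def needs_attention(entries: List[RetentionDefault] = RETENTION) -> List[RetentionDefault]:
    """Products where zero retention is not what you get out of the box."""
    return [entry for entry in entries if entry.zdr != "default"]


for entry in needs_attention():
    print(f"{entry.platform} / {entry.product}: {entry.zdr} ({entry.note})")
```

Retention policies change, so a table like this is a snapshot to re-verify against current vendor documentation rather than a lasting reference.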

Network Isolation And Deployment Control

The next question is where these workloads can actually live, and how much network control a customer gets without a sales process. On that front, DigitalOcean stands out for making private networking broadly accessible instead of treating it as an enterprise-only upgrade. VPC is a standard self-service feature, DOKS exposes network policies, and App Platform adds another layer of isolation through Kata Containers. That combination makes DigitalOcean unusually practical for teams that want private-network controls early, without first negotiating for them.

Nebius is arguably the strongest infrastructure story on paper, especially for larger enterprise or regulated environments. Its platform includes VPC support, InfiniBand isolation for AI-specific traffic, VM-level Kubernetes isolation, and a more explicit shared-responsibility model than most competitors. Baseten also offers a credible progression from shared cloud to single-tenant deployments to self-hosted VPC, which gives customers a clear security escalation path, but the highest-control version is still enterprise-led rather than truly self-serve. Together lands in a similar place: its VPC deployment model is documented more openly than many competitors, which is a real transparency win, but it is still something customers enable through an enterprise relationship rather than a standard dashboard flow.

Fireworks and Modal illustrate two different tradeoffs. Fireworks supports dedicated deployments and BYOC for stronger isolation, but the highest-control path remains sales-gated, which limits how accessible those controls are to smaller teams. Modal, by contrast, has the strongest sandboxing story in the group through gVisor and fine-grained network controls, giving it unusually strong workload isolation at the container level. But that strength comes with a major constraint: there is no BYOC model and no customer VPC option, so teams that require infrastructure inside their own private cloud simply do not have that path on Modal.

Logging, Auditability, And Evidence

Logging was one of the areas where the difference between paper posture and practical visibility really showed up. Nebius was the strongest on paper: it is the only platform here that clearly and publicly claims immutable, timestamped audit logs. Baseten also looked solid, but the public materials were less specific about how durable or tamper-resistant those logs actually are. Together says audit logging exists, but the docs are thinner, and we came away less sure how useful those logs would be in a real incident or audit.

Fireworks highlights another important distinction: some platforms have meaningful audit features, but not for everyone. Fireworks documents audit logs and data-access logging, which is a real strength, but those capabilities are effectively enterprise-gated rather than broadly available to self-serve users. That matters because smaller teams often still need visibility into who accessed what, even if they are not on a large contract. Modal stands out in a different way. Its biggest strength is not necessarily the most audit-specific language, but the surrounding observability ecosystem: documented integrations, stronger export paths, and unusually explicit public vulnerability remediation SLAs. That makes its logs feel more operationally useful, even if the public documentation says less about immutability than Nebius does.

DigitalOcean landed in the middle, but in a practically useful way. Its account security history, team activity logs, and third-party integrations provide enough visibility to make the platform feel operationally understandable, even if the Gradient-specific audit picture is lighter than some competitors’ platform-wide security claims. In our demo, DigitalOcean also exposed request-level metadata such as response headers and request IDs, which made it easier to reason about what happened during inference calls. That said, the activity log enrichment gap is worth noting: public research has already shown that some identity details in DigitalOcean’s logs are not as rich as they could be, which matters if you are thinking about incident investigation or strong attribution.
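
To make the request-level metadata point concrete, here is a minimal sketch of how a test harness might record that evidence for each inference call. It assumes an OpenAI-compatible response body with an `id` field; the header names in `REQUEST_ID_HEADERS` and the function name `extract_request_evidence` are hypothetical and not taken from any provider's documentation, so check what each platform actually returns.

```python
from typing import Mapping, Optional

# Hypothetical header names; real providers may use different ones.
REQUEST_ID_HEADERS = ("x-request-id", "request-id")


def extract_request_evidence(headers: Mapping[str, str], body: Mapping[str, object]) -> dict:
    """Collect the identifiers worth logging for one inference call."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    header_id: Optional[str] = next(
        (lowered[name] for name in REQUEST_ID_HEADERS if name in lowered), None
    )
    # Assumption: the JSON body follows the OpenAI-style shape with an "id" field.
    body_id = body.get("id")
    return {
        "header_request_id": header_id,
        "body_id": body_id if isinstance(body_id, str) else None,
        "model": body.get("model"),
    }


# Illustrative values only, not captured from a real provider.
evidence = extract_request_evidence(
    {"X-Request-ID": "req-123", "Content-Type": "application/json"},
    {"id": "chatcmpl-abc", "model": "example-model"},
)
print(evidence)
```

Storing these identifiers next to your own application logs gives you something to quote in a support ticket or incident review, even when the provider's audit logs are thin or gated.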

Vulnerability Disclosure And Security Transparency

Vulnerability disclosure is one of the clearest places where public security maturity separates these platforms. DigitalOcean stands out with the most mature public bug bounty program in the group, giving outside researchers a formal channel to report issues and showing a stronger willingness to invite scrutiny rather than just describe internal controls. Modal is the only other platform that comes close on this dimension, with a clearly public bug bounty and public remediation SLAs that make its response posture more visible than most peers. By contrast, Nebius, Baseten, Fireworks, and Together all show signs of serious internal security work, including compliance efforts, pentesting, and documented controls, but they do not expose the same level of formal public disclosure infrastructure. In practice, that means they communicate security mostly through trust materials and internal governance signals, while DigitalOcean and Modal do more to demonstrate how they handle outside security feedback in the open.

Shared Responsibility

Shared responsibility matters because these platforms do not secure everything for you, and the quality of their documentation affects whether customers can make informed deployment choices. DigitalOcean does the best job overall of explaining this at a practical level, with product-by-product shared responsibility documentation that makes it clear how the boundary shifts across services. Nebius is the strongest in formal structure, with the clearest platform-level matrix spelling out what the provider owns and what the customer still has to configure. Together also gives customers something useful here by explicitly splitting responsibilities between its cloud and VPC deployment models. Baseten and Fireworks are less concrete: the responsibility model can be inferred from their architecture and terms, but it is not laid out as clearly in one place. Modal does document shared responsibility, but in a narrower way that focuses more on backup, recovery, and application-level use than on a full layer-by-layer security split. Overall, the difference is not just documentation quality for its own sake; the more concrete the model is, the easier it is for a team to decide whether a managed deployment is enough or whether they need a VPC, BYOC, or tighter internal controls.

What The Demo Changed About My Conclusions

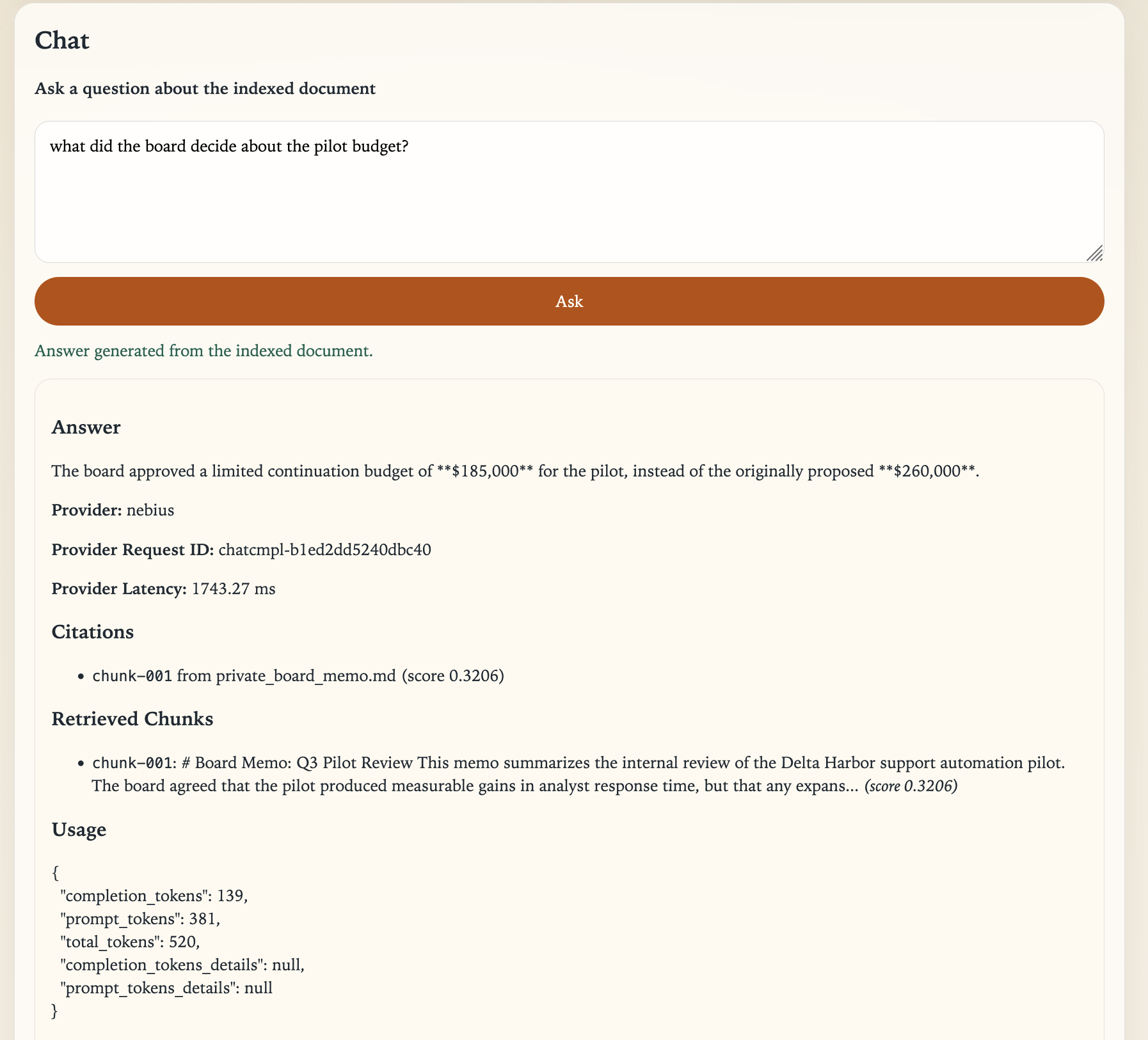

Figure 3: Example response showing grounded answer, retrieved chunks, and request-level metadata used for verification.

Here is what the test runs actually produced. The most useful details were setup friction, credential issues, latency, and any strange failure modes. This part of the review does not include Modal: its technical offerings differ from the others and, while no less relevant, did not fit this demo strategy. We kept it in the earlier documentation review for completeness.

| Provider | Model | Secrets UX | Model Available | Chat Success | Request ID | Latency (ms) | Failure Note |
|---|---|---|---|---|---|---|---|
| baseten | openai/gpt-oss-120b | Clear distinction between personal and team keys, with unusually strong scoping for inference-only, metrics-only, environment-scoped, and model-scoped access. | True | True | chatcmpl-6bc40858c89d41e19c01e3156aeb5a8b | 2255.55 | Baseten has one of the strongest least-privilege API key stories in the group. The main caveat is that automatic key expiration is not enabled by default, so teams still need good rotation hygiene. |
| digitalocean-gradient | openai-gpt-oss-20b | Moderately clear once you know to use a model access key, but the split between model access keys and regular platform credentials is easy to miss on a first pass. | True | True | 7e383608-4f7f-4345-8927-5012ab660d6f | 1867.7 | The app-level provider check is useful here because it quickly confirms whether the supplied model access key can see the configured model. In this setup, only retrieved context is sent to DigitalOcean for generation, not the entire uploaded document. Good first platform to validate the comparison harness, but keep in mind this evidence so far reflects hosted generation plus local retrieval rather than a fully provider-hosted RAG stack. |
| fireworks-ai | fireworks/gpt-oss-20b | Reasonably straightforward once you have the API key, but the exact model identifier story is less intuitive than some peers and can require checking live model listings carefully. | False | True | chatcmpl-41eb95ba8df541b0bc8aceddd853b565 | 2653.0 | Fireworks has a solid auth model with service accounts and role separation, but the practical setup experience is a little less polished because the model IDs returned by /v1/models do not always line up with the shorthand names a user might expect. Fireworks was the hardest platform to configure cleanly in the test harness. The workflow eventually completed, but it still returned an unexplained ‘Model Available: False’ result, which lowered confidence in the setup path. |
| nebius | openai/gpt-oss-20b | Strongest on paper for cloud-native identity because AI Cloud uses short-lived IAM tokens and service accounts, but the platform split between AI Cloud and Token Factory makes the overall auth story more complex to reason about. | True | True | chatcmpl-901e5e93134ffb5e | 3269.83 | Nebius offers the most mature authorization design in the research set when using AI Cloud, but Token Factory uses a simpler API-key model. That split is powerful, though it adds conceptual overhead during setup. |
| together-ai | openai/gpt-oss-20b | Project-scoped keys are a genuine security improvement, and the OAuth-first human login model is sensible, though some enterprise identity setup still appears team-assisted rather than fully self-serve. | True | True | oeVdiD7-4YNCb4-9e9c80f7eae13915 | 3632.25 | Together’s secrets story is stronger than a simple account-wide API key model because keys are organized at the project level. The main UX tradeoff is that the more advanced identity setup does not feel entirely self-serve yet. |

The demo changed my conclusions in a pretty specific way. The documentation was still useful, but once we started wiring up the same workflow across providers, the things that mattered most shifted. We cared less about who had the most impressive trust-center language and more about very practical questions: could we validate the key before use, could we see request-level evidence, and how much friction showed up in routine setup and teardown?
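
The "validate the key before use" question can be sketched as a preflight check against a model listing. This is an illustration rather than the harness we ran: it assumes the listing uses the OpenAI-style shape `{"data": [{"id": ...}]}`, the function name `check_model_visible` is hypothetical, and the model IDs are made up. The payload is passed in as a plain dict so the example stays independent of any one provider's client library.

```python
import difflib


def check_model_visible(models_payload: dict, configured_model: str) -> dict:
    """Report whether configured_model appears in a model-listing payload."""
    # Assumption: OpenAI-style listing shape {"data": [{"id": "..."}, ...]}.
    listed = [entry["id"] for entry in models_payload.get("data", []) if "id" in entry]
    available = configured_model in listed
    # When the exact ID is missing, surface near matches so a naming
    # mismatch is distinguishable from a key that cannot see the model.
    suggestions = [] if available else difflib.get_close_matches(
        configured_model, listed, n=3, cutoff=0.5
    )
    return {"available": available, "suggestions": suggestions}


# Made-up model IDs for illustration only.
payload = {"data": [{"id": "vendor/models/example-20b"}, {"id": "vendor/models/other-7b"}]}
exact = check_model_visible(payload, "vendor/models/example-20b")
shorthand = check_model_visible(payload, "vendor/example-20b")
print(exact)
print(shorthand)
```

Running a check like this before any private context is sent separates two failure modes that otherwise look the same in a chat call: a key that lacks access, and a model identifier that does not match what the listing returns.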

That shift especially sharpened my view of DigitalOcean. The research had already suggested it was strong on self-service networking, shared responsibility clarity, and overall cloud security maturity. The demo did not overturn that, but it did refine it: what impressed me most was not a single headline security feature, but the fact that the platform was easy to validate in practice. We could confirm model visibility, inspect request behavior, and run the full retrieval-plus-generation flow without much ambiguity. That made DigitalOcean feel operationally trustworthy. After actually using it, we trusted it more than we did from the docs alone.
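
The grounding and deletion checks behind that full-flow run can be sketched in a few lines. This is a simplified illustration, assuming both the canary and the deletion marker are distinctive phrases planted in the uploaded document; the function names and sample strings are hypothetical, and a substring match is only a rough signal, not proof of grounding or of deletion.

```python
def contains_marker(answer: str, marker: str) -> bool:
    """Case-insensitive substring check for a planted phrase."""
    return marker.casefold() in answer.casefold()


def evaluate_run(answer_before: str, answer_after: str,
                 canary: str, deletion_marker: str) -> dict:
    """Summarize grounding and deletion behavior for one provider run."""
    return {
        # The canary only exists in the uploaded file, so seeing it suggests
        # the answer drew on retrieved context rather than model priors.
        "grounded": contains_marker(answer_before, canary),
        # After the document is deleted, the marker should no longer appear.
        "deleted_cleanly": not contains_marker(answer_after, deletion_marker),
    }


# Hypothetical phrases and answers for illustration only.
result = evaluate_run(
    answer_before="The project codename is Violet-Heron-42.",
    answer_after="I could not find that in the available documents.",
    canary="violet-heron-42",
    deletion_marker="violet-heron-42",
)
print(result)
```

Because retrieval stays local in this setup, a failed deletion check points at the local index or the harness first, and only then at the inference provider.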

At the same time, the demo made me less confident in judging providers purely by polished documentation or formal claims. Some platforms with strong privacy or compliance stories still introduced confusing setup paths, unclear defaults, or avoidable UX friction once tested live. That does not erase their documented strengths, but it does matter for real adoption: security is not only about what a platform says it supports, but also about how confidently a builder can verify those claims while shipping an actual workload. The main conclusion, then, is more practical than before: the most trustworthy platform is not just the one with the strongest paper posture, but the one that makes its security properties easiest to observe and confirm firsthand.

Concluding Thoughts

If we were choosing based only on the trust-center checklist, this comparison would have a different winner. Nebius has the broadest compliance portfolio by a significant margin — company-level ISO 27001, Deloitte-audited SOC 2, NIS2, DORA, and certifications most of the other platforms haven’t started working toward. Baseten has the cleanest default retention posture, shipping zero-storage out of the box rather than requiring customers to find and enable it. Modal has the most technically interesting isolation story, with gVisor sandboxing and function-level network controls that go deeper than anything else in this group. On any individual dimension, one of those three platforms could reasonably claim the top position.

But the checklist isn’t the whole question. The more useful question for a builder wiring up a real workload is which platform makes its security properties easiest to observe and verify before anything sensitive is in flight. That’s a different test, and it produced a different answer.

DigitalOcean is the only platform in this review where we could provision private networking from a dashboard without a sales call, run a full inference workflow with request-level metadata visible, validate model access before sending any private context, and read a clear explanation of exactly what the platform secures versus what we still own - all without an enterprise contract. The bug bounty program has five years of history and over $63,000 paid to researchers in the past year alone. The shared responsibility documentation exists at the product level rather than as a single generic page. The auth stack includes scoped tokens, RBAC, SSO, and enforceable 2FA without a tier upgrade. None of those things are the most impressive single feature in the comparison, but together they describe a platform where security is operationally accessible rather than aspirationally documented.

That distinction matters more than it might seem. Several platforms in this review have strong security claims that are hard to verify without deeper access - audit logs gated behind enterprise tiers, VPC options that require a sales conversation, retention defaults that don’t match the marketing language once you read the fine print carefully. For a team that needs to make a deployment decision without first negotiating a contract, that gap between documented and verifiable is a real risk. DigitalOcean closes more of that gap than any other platform in this set.

The caveats are real and worth keeping in mind. If your procurement process requires a company-level ISO 27001 certification, Nebius is the only platform here that holds one. If zero data retention by default is your primary requirement and you’re running standard inference rather than a full cloud workload, Baseten and Fireworks both have stronger defaults than DigitalOcean’s Gradient for third-party models. If your threat model centers on execution-layer isolation for arbitrary code rather than network perimeter control, Modal’s gVisor foundation is genuinely hard to match. This comparison isn’t arguing that DigitalOcean wins on every dimension - it doesn’t. What DigitalOcean does better than the rest is combine decent security depth with controls that are actually reachable in a self-serve workflow. That mattered more to us than we expected. If you are trying to evaluate a platform before sensitive data is already in motion, being able to check those boundaries yourself is a real advantage.
